Welcome to the 7th newsletter for 2026!

A quick note before we get started: If you are new here, welcome. I use this space to write through what I am seeing and learning across warehouse design and automation as the industry evolves.

Why "Scalable" Is a Lie We Tell Ourselves

I was on the phone recently with an industry veteran, talking through the pros and cons of pick assist AMRs. Smart conversation, the kind where you're both nodding even though you're on a call.

Got me thinking about scalability. Not the version vendors pitch - the version that actually shows up on the warehouse floor.

Because here's what keeps happening: Vendor slides show 300 bots at 99.8% utilization. ROI model scales linearly. Everyone nods. Then you deploy 120 bots in a real warehouse during peak and watch the whole thing cascade into recovery in 11 minutes.

I've been in that room. Multiple times.

And here's what I've learned: "scalable" is a lie we tell ourselves.

Not because vendors are dishonest. Not because integrators are incompetent. Their systems CAN add more units. Their models DO work in controlled environments. But as you scale bots in high-variation warehouses, these five failure modes just... show up.

None of these failures happen because robots don't work. They happen because we mistake unit scalability for system scalability.

Here are the five breaking points I keep seeing.

1. Charging Infrastructure (The Math Lie)

This one is so predictable it's almost boring - until Black Friday.

Look, the vendor model always assumes perfectly staggered demand. No thermal throttling. Chargers never go offline. Bots politely take turns needing power.

That's not how it works.

At 50-70 bots, opportunity charging looks smooth. You get past 100 and suddenly you're seeing synchronized charging waves. Because here's what nobody tells you: battery burn becomes correlated, not random. Heavy travel period? Every bot takes longer paths. Congestion spike? They're all rerouting and waiting. Heat builds up? They all slow down and burn more power.

Your average charge plan won't save you from synchronized demand spikes.

The "oh shit" moment is when you realize your electrical service was sized for the demo numbers. Not the actual simultaneous charging demand during peak with all the safety factors you should have included.

And if you're already in production facing this? Getting more electrical capacity to a warehouse is a 6-12 month process. Not a one-week fix. I've seen operators running additional shifts just to charge the bots that never got power during the day. Your 'lights out' automation now needs a graveyard shift for charging management.

(We should probably define what "lights out" automation actually means as an industry - because if my definition is right, it's a false fallacy we're all chasing. But that's a different newsletter.)

This is how a 'small' electrical miss becomes a peak throughput miss - and a long, expensive retrofit.

2. Orchestration Complexity (The NP-hard Wall)

Past ~100 bots, the system stops being traffic management and becomes air traffic control. Conflict density goes up. Recovery cascades faster than humans can intervene.

Here's what you'll see when it starts breaking:

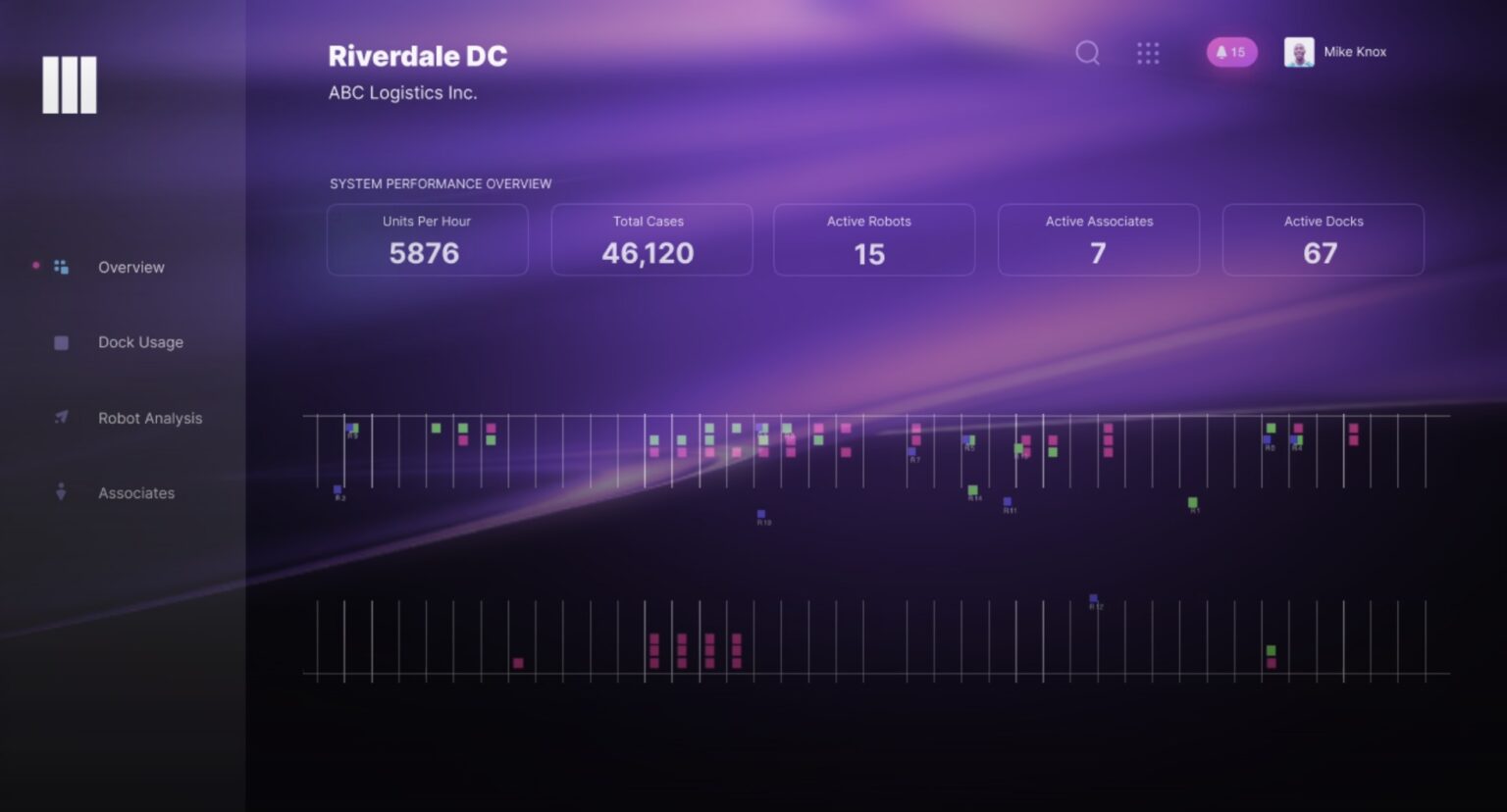

Fleet dashboard says everything's available. But your recovery state percentage is climbing. Replans per minute are exploding because the system is spending more time re-resolving constraints than actually moving bots. Controller starts dropping heartbeats because the message bus can't keep up. And throughput? Collapsing. Even though every utilization metric looks fine.

I watched a 220-bot deployment go from "looking good in the war room" to 40% of the fleet stuck in recovery state. Eleven minutes. That's all it took.

The traffic manager couldn't resolve conflicts fast enough. Bots were rerouting, waiting, rerouting again. The system was drowning in its own attempts to optimize.

We had to carve the warehouse into hard geographic partitions - only 60-70 bots per partition, very limited cross-traffic. It felt like admitting defeat. Like going back to 2018. But it was the only way to stop the cascading stalls.

If operators don't trust recovery at 2 a.m., it doesn't work.

3. Exception Handling (The Human Bandwidth Cliff)

This is the one that kills perceived ROI fastest.

Small scale, it's fine. One person on a scooter clearing jams as they happen. No problem.

Then you scale up. And in high-variation sites, the intervention rates start climbing. You're not running a pick operation anymore. You're running a recovery operation. And exceptions don't spread out nicely - they cluster. One jam leads to congestion, congestion causes more jams, and suddenly you've got four problems at once.

The worst version I've seen? Site with 185 bots. Four-person recovery team. They literally could not physically reach the far side of the building before the next jam happened. So they gave up trying. Created a permanent "bot hospital" zone where robots were just... herded for repair.

Throughput dropped 19% from plan. This is where ROI actually dies.

Not because robots cost too much. Because you're bleeding money on recovery labor and your throughput is all over the place. Some days you hit plan; some days you're 15% short. Cutoffs are missing. SLA penalties start showing up. And then the worst part - leadership starts questioning whether this was a good idea.

This one is almost always the first thing to break, not the last.

Classic pattern:

WMS was written in 2009, handles steady-state traffic comfortably

You show up with 180 bots doing 6-8 transactions per minute

On paper that's ~18-24 transactions per second - but in reality the load multiplies through call amplification, retries, locking, and downstream confirmations

You don't overload the WMS on bot count. You overload it on call amplification + locking + latency compounding.

I've seen:

REST calls that were sub-200ms at 40 bots hitting 2+ seconds at 160 bots

Message queues backing up until the entire orchestration layer went read-only

One site where the WMS started returning success responses with missing or partial payloads because the app server thread pool was exhausted - bots thought everything was fine and kept driving into already-picked locations

What fixes this? Honestly, it depends. I've seen operators upgrade WMS infrastructure, implement rate limiting, batch API calls, or add middleware layers. What works for your 2009 Oracle instance won't work for someone else's modern cloud WMS. The only universal truth: you need to stress-test your enterprise systems at scale before you're in production, not after.

The bottleneck you thought was "just infrastructure" actually kills your ability to ship on time.

5. The Automation Staffing Inversion

The cruelest one.

You can't just hire more people to solve this. The skill curve doesn't work that way.

Tier 1 (monitoring): Sure, one person can watch a lot of bots. Until exceptions start clustering and suddenly every alarm is screaming and nothing means anything anymore.

Tier 2 (recovery): Now you need someone who understands system state. Not just "Bot 47 has an error" - but why Bot 47's error is causing Bots 22, 89, and 103 to stall.

Tier 3 (the robot whisperer): The person who actually understands what error code 0x4A2F means and can fix it in 90 seconds instead of 20 minutes. These people are unicorns. And you will fight other sites for them.

I've seen sites where the automation team grew from 6 people to 28 while the robot count only went from 45 to 165. The labor savings? Gone. Just disappeared into a new, more expensive kind of labor.

If no one owns recovery, automation doesn't scale.

Bottom Line

Look, if you're still in the "we'll just add more bots" phase, you're about to learn these lessons the expensive way.

The vendors will swear it scales. The integrators will show you PowerPoints with hockey-stick curves. And I haven't seen a 200+ bot deployment that didn't require re-architecting at least two of these five areas after going live. Not one.

The ones that succeed? They treat the fleet like a living organism that needs constant surgery. Not like magic boxes you drop on the floor and forget about.

You're not crazy. These issues are real, they're common, and they're why so many "fully automated" warehouses still have 40 people on the floor at 3 a.m. "just in case."

When recovery isn't designed up front, automation doesn't fail quietly. It fails in peak, under scrutiny.

Better questions than "does it scale?":

Where will it break first?

How will we recover when it does?

What re-architecture budget did we include?

What's our recovery plan at 80 bots? At 150?

The answer isn't "don't automate" or "don't scale."

Infinitely scalable is a lie we tell ourselves. Scalable with guardrails and expected changes - that's what's actually achievable.

If you've lived through any of these, you're not alone. That's why I document what actually happens on warehouse floors every Sunday. What have you seen break first?

Hit reply - I'm building a library of real patterns, not vendor case studies. See you next week.

-Parth

News

Thoughts? Questions? Feedback? Reply to this email.